Remote SSH to Dataplex from FEP

Access to gpu.grid.pub.ro is done through our frontend (fep.grid.pub.ro). A new queue, ibm-dp.q, was created, and all the Dataplex machines (dp-wn01 to dp-wn04) were added to it.

Console access is still available through the "qlogin" program:

If you want to choose a specific machine:

X11 forwarding is also active.

You can reserve multiple queue slots (up to 24 per machine) with the "-pe" option. If you reserve all 24 slots, nobody else can log in to that machine:
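As a sketch only, the three cases above could be wrapped in one helper. It assumes standard SGE qlogin options (-q QUEUE, -q QUEUE@HOST to pin a node, -pe PE SLOTS to reserve slots) and assumes that the "openmpi" parallel environment used by apprun.sh elsewhere on this site is also attached to ibm-dp.q (check with qconf -spl). The function name dp_login and the DRY_RUN variable are hypothetical.

```bash
#!/bin/bash
# Sketch: interactive session on the Dataplex queue through SGE qlogin.
# Assumptions: queue@host pins a node, and the "openmpi" parallel
# environment is attached to ibm-dp.q (check with: qconf -spl).
# Usage: dp_login [HOST] [SLOTS]; with DRY_RUN=1 the command is only printed.
dp_login() {
    local host="$1" slots="${2:-1}"
    local queue="ibm-dp.q"
    if (( slots < 1 || slots > 24 )); then
        echo "slots must be between 1 and 24" >&2
        return 1
    fi
    [[ -n "$host" ]] && queue="ibm-dp.q@${host}"
    local cmd=(qlogin -q "$queue")
    # More than one slot needs a parallel environment; 24 slots = whole node.
    (( slots > 1 )) && cmd+=(-pe openmpi "$slots")
    if [[ "${DRY_RUN:-0}" == 1 ]]; then
        echo "${cmd[*]}"
    else
        "${cmd[@]}"
    fi
}
```

For example, dp_login dp-wn01 24 would request all 24 slots on dp-wn01, while DRY_RUN=1 dp_login dp-wn01 24 only prints the qlogin command it would run.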

Module load not working in bash

Problem: "module load" does not work in bash.

Workaround: initialize the Modules environment manually by sourcing its bash init script:

```bash
. /opt/modules/Modules/3.2.5/init/bash
```

For example:

```bash
#!/bin/bash
# Define the "module" shell function before using it.
. /opt/modules/Modules/3.2.5/init/bash

module load java/jdk1.6.0_23-64bit
module load libraries/hadoop-0.20.2

# HADOOP_HOME and HADOOP_VERSION are presumably set by the hadoop module.
mkdir -p build
javac -classpath "${HADOOP_HOME}/hadoop-${HADOOP_VERSION}-core.jar" \
      -sourcepath src \
      -d build \
      src/org/myorg/WordCount.java
jar -cvf wordcount.jar -C build/ .
```

You need this workaround only when running on fep.grid.pub.ro; the worker nodes do not need it.
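Because only fep needs the workaround, a script meant to run both on fep and on the worker nodes could source the init script only when the module shell function is missing. A minimal sketch; the function name ensure_module_cmd and the MODULES_INIT override variable are hypothetical:

```bash
#!/bin/bash
# Init script path from the workaround above; can be overridden.
MODULES_INIT="${MODULES_INIT:-/opt/modules/Modules/3.2.5/init/bash}"

# Make sure the "module" shell function exists, sourcing the init script
# only when it is missing (e.g. on fep). Returns non-zero on failure.
ensure_module_cmd() {
    declare -F module >/dev/null && return 0
    if [[ ! -r "$MODULES_INIT" ]]; then
        echo "cannot read $MODULES_INIT" >&2
        return 1
    fi
    . "$MODULES_INIT"
    declare -F module >/dev/null
}
```

Call ensure_module_cmd || exit 1 near the top of the script, then use module load as in the example above.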

Original link: http://www.unix.com/unix-advanced-expert-users/74588-makefile-problem-how-run-module-load-makefile.html

How to use Yum VersionLock to keep specific versions of your kernel

I never seem to find one site that gives all the answers on using yum versionlock. First, list the installed kernel packages:

```
[root@quad-wn16 ~]# rpm -qa --queryformat "%{NAME}-%{VERSION}-%{RELEASE}\n" | grep kernel
kernel-lustre-devel-2.6.18-128.7.1.el5_lustre.1.8.1.1
kernel-xen-devel-2.6.18-128.7.1.el5
kernel-2.6.18-128.1.1.el5
kernel-2.6.18-164.9.1.el5
kernel-lustre-2.6.18-128.7.1.el5_lustre.1.8.1.1
kernel-ib-1.4.2-2.6.18_128.7.1.el5_lustre.1.8.1.1
kernel-headers-2.6.18-164.9.1.el5
kernel-devel-2.6.18-128.7.1.el5
```

Then edit the versionlock list:

```
[root@quad-wn16 ~]# vi /etc/yum/pluginconf.d/versionlock.list
```

and add one line for each package version you want to keep:

```
kernel-2.6.18-128.1.1.el5
kernel-2.6.18-164.9.1.el5
kernel-lustre-devel-2.6.18-128.7.1.el5_lustre.1.8.1.1
kernel-xen-devel-2.6.18-128.7.1.el5
kernel-lustre-2.6.18-128.7.1.el5_lustre.1.8.1.1
kernel-ib-1.4.2-2.6.18_128.7.1.el5_lustre.1.8.1.1
kernel-headers-2.6.18-164.9.1.el5
kernel-devel-2.6.18-128.7.1.el5
```

That's it.
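Instead of typing the list in vi, you could generate it from the same rpm query. A minimal sketch with hypothetical function names; it assumes the plugin accepts the NAME-VERSION-RELEASE entries used above, matches only names that start with "kernel" (the grep above matches "kernel" anywhere), keeps the entries already in the file, and rewrites the file sorted and without duplicates.

```bash
#!/bin/bash
# Keep only package names starting with "kernel", sorted and deduplicated.
kernel_lock_entries() {
    grep '^kernel' | LC_ALL=C sort -u
}

# Add the currently installed kernel packages to the versionlock list,
# keeping existing entries. Run as root.
write_versionlock() {
    local list="${1:-/etc/yum/pluginconf.d/versionlock.list}"
    local tmp
    tmp=$(mktemp) || return 1
    {
        [[ -f "$list" ]] && cat "$list"
        rpm -qa --queryformat '%{NAME}-%{VERSION}-%{RELEASE}\n' | kernel_lock_entries
    } | LC_ALL=C sort -u > "$tmp" && cat "$tmp" > "$list"
    local status=$?
    rm -f "$tmp"
    return "$status"
}
```

Writing through cat "$tmp" > "$list" keeps the original file's ownership and permissions.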

HowTo create KVM Virtual Machines on the cluster

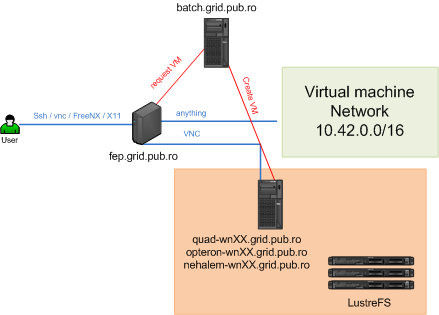

This is a howto for creating KVM virtual machines on the NCIT-Cluster. You can run any virtual machine that has a qcow2 disk, with as many processors and as much memory as you need, without disturbing other users. Because we use KVM, you get VNC access to the virtual machine and an IP address in 10.42.X.X/16.

Objectives

Suppose you have a lab virtual machine and want to run several instances of it, and when you're done, you want to revert to the original virtual machine. You can achieve this with a master VM instance: set up the lab in this master instance, shut the virtual machine down, and run as many copies as you need using KVM's copy-on-write option. KVM will use your hard disk image as a read-only file and write all changes to another file. If you need to update the lab machine, shut down all instances, delete the instance files, update the master machine, and you're done.

Virtual Infrastructure Architecture

In short, you use the Sun Grid Engine batch system to reserve resources (CPU and memory). All virtual machines are stored on LustreFS, so you get an InfiniBand connection on the Opteron nodes and a maximum total throughput of 4x1 Gb on the other nodes. Total cores available: 380 (for now).

```
[alexandru.herisanu@fep-53-1 ~]$ qstat -g c
CLUSTER QUEUE    CQLOAD   USED    RES  AVAIL  TOTAL aoACDS  cdsuE
all.q              0.52      0      0    180    380    200      0
ibm-nehalem.q      -NA-      0      0      0      0      0      0
ibm-opteron.q      0.77    120      0     36    168      0     12
ibm-quad.q         0.34     80      0    144    224      0      0
```
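To see where there is room before starting a VM, you could filter this output. A sketch that assumes the SGE 6.2 column order of qstat -g c (CLUSTER QUEUE, CQLOAD, USED, RES, AVAIL, TOTAL, aoACDS, cdsuE), which matches the numbers above (for ibm-opteron.q, 120 + 36 + 12 = 168); the function name free_queues is hypothetical.

```bash
#!/bin/bash
# Print "queue available_slots" for every cluster queue with at least MIN
# free slots (default 1). Reads "qstat -g c" output on stdin and assumes
# the 8-column layout: name CQLOAD USED RES AVAIL TOTAL aoACDS cdsuE.
free_queues() {
    local min="${1:-1}"
    awk -v min="$min" '
        $1 == "CLUSTER" || $1 ~ /^-/ { next }   # header and separator line
        NF == 8 && $5 + 0 >= min + 0 { print $1, $5 }
    '
}
```

For example, qstat -g c | free_queues 8 lists the queues with at least 8 free slots. AVAIL is summed over all hosts of a queue, so it does not guarantee that a single host has that many free slots.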

Setup

First of all, you need to be in the kvm-user group. Some templates are available in /d02/home/ncit-cluster/kvm-templates, but you can use any KVM machine.

There are two available templates:

- Oracle RHEL 5.2 64 bit

- Debian 5 with two OpenVZ containers

Copy one of them to ~/LustreFS in your home folder. This places it on your Lustre share (email us if you cannot access it).
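The two prerequisites (membership in kvm-user and a copy of a template on your Lustre share) could be checked and done in one step. A sketch: the function name, the KVM_TEMPLATES override and the template name used in the example are hypothetical, so list /d02/home/ncit-cluster/kvm-templates first to see the real names.

```bash
#!/bin/bash
KVM_TEMPLATES="${KVM_TEMPLATES:-/d02/home/ncit-cluster/kvm-templates}"

# Copy template NAME into DEST (default ~/LustreFS), but only if the
# current user is in the kvm-user group and DEST exists.
copy_template() {
    local name="$1" dest="${2:-$HOME/LustreFS}"
    if ! id -nG | tr ' ' '\n' | grep -qx kvm-user; then
        echo "not a member of kvm-user; ask the administrators" >&2
        return 1
    fi
    if [[ ! -d "$dest" ]]; then
        echo "$dest not found; ask the administrators for Lustre access" >&2
        return 1
    fi
    # -r works whether the template is a single file or a directory.
    cp -r -- "$KVM_TEMPLATES/$name" "$dest/"
}
```

For example, copy_template NAME copies the template NAME into ~/LustreFS.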

It should look like this:

```
[alexandru.herisanu@fep-53-1 ~]$ id
uid=9552(alexandru.herisanu) gid=9000(profi) groups=514(app-gaussian03),515(vtune),518(kvm-user),9000(profi)
[alexandru.herisanu@fep-53-1 ~]$ cd LustreFS/virtual_machines/db2-image/
[alexandru.herisanu@fep-53-1 db2-image]$ ls
db2-hda.qcow2                           hda-inst_ABDLab.qcow2
db2-hdb.qcow2                           hdb-inst_ABDLab01.qcow2
db2-image.sh                            hdb-inst_ABDLab02.qcow2
Enterprise-R5-U5-Server-x86_64-dvd.iso  hdb-inst_ABDLab.qcow2
hda-inst_ABDLab01.qcow2                 out
hda-inst_ABDLab02.qcow2                 start_machines.sh
```

There are several important files:

- db2-hda.qcow2 - your hard disk

- hda-inst_ABDLab01.qcow2 - a copy-on-write instance of your hard disk. db2-hda.qcow2 is used read-only, and this file contains only the changes. Delete it and your machine reverts to the original.

- start_machines.sh - your start script

- out - output directory (stdout and stderr) for the batch system.
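apprun.sh creates the instance file for you when --master is not given. To show the copy-on-write mechanism on its own, here is a sketch using plain qemu-img; it assumes a qemu-img that supports -F (backing file format), which newer versions expect. The function names are hypothetical, and this is not necessarily how run-vm.sh does it.

```bash
#!/bin/bash
# Create the copy-on-write overlay DISK-inst_VMNAME.qcow2 on top of MASTER.
# The master stays read-only; the instance writes only to the overlay.
# Run in the VM directory: a relative backing name is resolved relative
# to the overlay's own directory.
make_overlay() {
    local master="$1" vmname="$2" disk="${3:-hda}"
    local overlay="${disk}-inst_${vmname}.qcow2"
    if [[ ! -f "$master" ]]; then
        echo "master image $master not found" >&2
        return 1
    fi
    if [[ -e "$overlay" ]]; then
        echo "$overlay exists; use reset_overlay $vmname to start over" >&2
        return 1
    fi
    # -F names the format of the backing (master) image.
    qemu-img create -f qcow2 -F qcow2 -b "$master" "$overlay" >/dev/null || return 1
    echo "$overlay"
}

# Revert an instance to the master image by deleting its overlay.
reset_overlay() {
    local vmname="$1" disk="${2:-hda}"
    rm -f -- "${disk}-inst_${vmname}.qcow2"
}
```

For example, make_overlay db2-hda.qcow2 ABDLab03 creates hda-inst_ABDLab03.qcow2, and reset_overlay ABDLab03 deletes it so the next start begins from the master image again. Do not modify the master image while overlays based on it still exist.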

Set up your start_machines.sh startup script. It looks like this:

```bash
#!/bin/bash
# QUEUE@HOST stands for the queue, or queue@host, you submit to (see --queue below).

##
## Master Copy
#
#apprun.sh kvm --queue QUEUE@HOST --vmname ABDLab --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:01 --vncport 0 --master

##
## Slave Copy
#
apprun.sh kvm --queue QUEUE@HOST --vmname ABDLab01 --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:01 --vncport 1
apprun.sh kvm --queue QUEUE@HOST --vmname ABDLab02 --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:02 --vncport 1
```

Let me explain (you only have to write this once):

```
apprun.sh kvm --queue QUEUE@HOST --vmname ABDLab --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:01 --vncport 0 --master
```

- apprun.sh kvm - our wrapper script around SGE

- --queue: where to submit this machine. You can use any queue, or you can also specify the host: ibm-quad.q selects any host from that queue, while the queue@host form selects exactly that host.

- --vmname: the name of the machine (required)

- --cpu: number of CPUs. Quad nodes have 2x4 cores, Opteron nodes 2x6 and Nehalem nodes 2x8 cores available.

- --memory: amount of memory, e.g. 2048M. Quad and Opteron nodes have 16 GB of memory, Nehalem nodes have 32 GB.

- --hda: first hard disk, in qcow2 format

- --hdb: [optional] second hard disk

- --cdrom: [optional] CD-ROM ISO image

- --status: a status file (it may be used)

- --mac: you MUST specify the full MAC address. Choose it so that it does not overlap with anyone else's. It's best to send us the MAC range you use, so you get "static" IP addresses through DHCP.

- --vncport: you MUST specify this so that you do not overlap with anybody. Valid values are 0 to 20.

- --master: run the main instance; the --hda file itself is modified. If you don't specify this, a new hard disk is created that records only the changes. It's named hda-inst_VMNAME.qcow2 (for example hda-inst_ABDLab01.qcow2).
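Because every instance needs its own MAC address and VNC port, the lines of start_machines.sh can be generated instead of edited by hand. A sketch: gen_instances is a hypothetical helper that numbers instances from 01, uses the instance number as the last MAC octet, and assigns consecutive VNC ports within 0 to 20. Unlike the example above, where both instances use --vncport 1 on different hosts, it never reuses a port, which is the safer choice when you do not pin each machine to a host.

```bash
#!/bin/bash
# Print one apprun.sh line per instance: NAME01, NAME02, ... with MAC
# PREFIX:01, PREFIX:02, ... and consecutive VNC ports from VNC_BASE.
# Extra arguments (queue, cpu, memory, disks) are copied onto every line;
# they are joined with spaces, so arguments containing spaces are not kept.
# Usage: gen_instances NAME COUNT MAC_PREFIX VNC_BASE [apprun.sh options...]
gen_instances() {
    if (( $# < 4 )); then
        echo "usage: gen_instances NAME COUNT MAC_PREFIX VNC_BASE [options...]" >&2
        return 1
    fi
    local name="$1" count="$2" prefix="$3" vnc_base="$4"
    shift 4
    local common="${*:+$* }" re='^([0-9a-fA-F]{2}:){4}[0-9a-fA-F]{2}$'
    local i mac
    if [[ ! $prefix =~ $re ]]; then
        echo "MAC prefix needs 5 octets, e.g. 52:54:01:12:34" >&2
        return 1
    fi
    if (( count < 1 || vnc_base < 0 || vnc_base + count - 1 > 20 )); then
        echo "instances do not fit into VNC ports 0-20" >&2
        return 1
    fi
    for (( i = 1; i <= count; i++ )); do
        printf -v mac '%s:%02x' "$prefix" "$i"
        printf 'apprun.sh kvm %s--vmname %s%02d --mac %s --vncport %d\n' \
            "$common" "$name" "$i" "$mac" "$(( vnc_base + i - 1 ))"
    done
}
```

For example, gen_instances ABDLab 2 52:54:01:12:34 1 --queue ibm-quad.q --cpu 2 --memory 2048M --hda db2-hda.qcow2 >> start_machines.sh appends two instance lines. Pick a MAC prefix and VNC range that nobody else uses.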

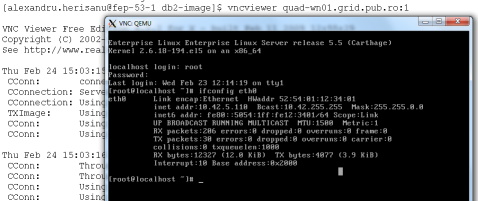

How do you connect?

You can connect to fep using SSH, VNC, FreeNX or SSH with X11 forwarding. From there, if you know the IP address of the machine, you can access it directly from fep; if not, you can connect to the machine through KVM, like this:

```
[alexandru.herisanu@fep-53-1 db2-image]$ cat start_machines.sh | grep -v ^#
apprun.sh kvm --queue QUEUE@HOST --vmname ABDLab01 --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:01 --vncport 1
apprun.sh kvm --queue QUEUE@HOST --vmname ABDLab02 --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:02 --vncport 1
```

This is what my machine startup looks like: I will run two instances on two separate hosts.

```
[alexandru.herisanu@fep-53-1 db2-image]$ ./start_machines.sh
Checking /export/home/ncit-cluster/prof/alexandru.herisanu/.ncit-cluster/ ...
Using /srv/ncit-cluster/apprun/kvm as environment ...
Running custom run script ...
JobId: 6732
job-ID prior name user state submit/start at queue slots ja-task-ID
-----------------------------------------------------------------------------------------------------------------
6732 0.00000 ABDLab01-K alexandru.he qw 02/24/2011 15:01:26 2
Qsub command:
qsub -q QUEUE@HOST -pe openmpi 2 -N ABDLab01-KVM -o out -e out -cwd /tmp/tmp.DPDOqGl533
Running script:
module load compilers/gcc-4.1.2
module load mpi/openmpi-1.5.1_gcc-4.1.2
sudo /srv/ncit-cluster/scripts/run-vm.sh --vmname ABDLab01 --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:01 --vncport 1 --user alexandru.herisanu
Checking /export/home/ncit-cluster/prof/alexandru.herisanu/.ncit-cluster/ ...
Using /srv/ncit-cluster/apprun/kvm as environment ...
Running custom run script ...
JobId: 6733
job-ID prior name user state submit/start at queue slots ja-task-ID
-----------------------------------------------------------------------------------------------------------------
6732 0.00000 ABDLab01-K alexandru.he qw 02/24/2011 15:01:26 2
6733 0.00000 ABDLab02-K alexandru.he qw 02/24/2011 15:01:26 2
Qsub command:
qsub -q QUEUE@HOST -pe openmpi 2 -N ABDLab02-KVM -o out -e out -cwd /tmp/tmp.rhCEktl561
Running script:
module load compilers/gcc-4.1.2
module load mpi/openmpi-1.5.1_gcc-4.1.2
sudo /srv/ncit-cluster/scripts/run-vm.sh --vmname ABDLab02 --cpu 2 --memory 2048M --hda db2-hda.qcow2 --hdb db2-hdb.qcow2 --cdrom Enterprise-R5-U5-Server-x86_64-dvd.iso --status status.txt --mac 52:54:01:12:34:02 --vncport 1 --user alexandru.herisanu
```

Great! They are up and running, and we can see that the slots are reserved for us, so other batch jobs cannot take those resources.

```
[alexandru.herisanu@fep-53-1 db2-image]$ qstat
job-ID prior name user state submit/start at queue slots ja-task-ID
-----------------------------------------------------------------------------------------------------------------
6732 0.51409 ABDLab01-K alexandru.he r 02/24/2011 15:01:34 QUEUE@HOST 2
6733 0.51409 ABDLab02-K alexandru.he r 02/24/2011 15:01:36 QUEUE@HOST 2
```

You know which host each VM runs on, so connect to it with vncviewer and get the machine's IP address. Or connect to the IP address directly if you registered it in DHCP beforehand.
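To find the host without reading the qstat table by eye, you could extract it from the queue column. A sketch with a hypothetical function name; it relies on two details visible in the transcript above (apprun.sh names the job VMNAME-KVM, and plain qstat shows only the first 10 characters of job names) and assumes the queue column of a running job has the form queue@host.

```bash
#!/bin/bash
# Read plain "qstat" output on stdin and print the execution host of the
# running job that belongs to VM NAME (job name NAME-KVM, truncated to 10
# characters as qstat displays it).
vm_host() {
    local job="$1-KVM"
    awk -v name="${job:0:10}" '
        $3 == name && $5 == "r" {
            if (split($8, q, "@") == 2) print q[2]
        }'
}
```

For example, host=$(qstat | vm_host ABDLab01) followed by vncviewer "$host:1", assuming --vncport 1 corresponds to VNC display :1 on that host. VM names that share their first 10 characters (including "-KVM") cannot be told apart this way.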

How do I shut down the VM?

You can shut the VM down from inside the virtual machine:

```
shutdown -h now
```

Or you can use qdel:

```
[alexandru.herisanu@fep-53-1 db2-image]$ qstat
job-ID prior name user state submit/start at queue slots ja-task-ID
-----------------------------------------------------------------------------------------------------------------
6732 0.51409 ABDLab01-K alexandru.he r 02/24/2011 15:01:34 QUEUE@HOST 2
6733 0.51409 ABDLab02-K alexandru.he r 02/24/2011 15:01:36 QUEUE@HOST 2
[alexandru.herisanu@fep-53-1 db2-image]$ qdel 6732
alexandru.herisanu has registered the job 6732 for deletion
[alexandru.herisanu@fep-53-1 db2-image]$ qstat
job-ID prior name user state submit/start at queue slots ja-task-ID
-----------------------------------------------------------------------------------------------------------------
6733 0.51409 ABDLab02-K alexandru.he r 02/24/2011 15:01:36 QUEUE@HOST 2
```

Voilà!

Heri

How to control your own Blade on the NCIT BladeCenter H Chassis

Sometimes students get direct remote access to a blade through the IBM AMM management interface.

For this, you need an account on the IBM AMM and access to the management network (192.168.1.0/24). First set up your VPN, then add the route to the management network. In a command prompt running as Administrator (this is the Windows route syntax), run:

```
route add 192.168.1.0 mask 255.255.255.0 192.168.2.1
```

The BladeCenter chassis IP addresses are 192.168.1.61 to 192.168.1.64.
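The route command above uses the Windows syntax. On a Linux client connected to the same VPN, an equivalent sketch (run as root) could use ip route; it assumes 192.168.2.1 is also your gateway to the management network there, and the function names are hypothetical. The check only tests whether each chassis address answers ping, which may be disabled, so "unreachable" is not conclusive.

```bash
#!/bin/bash
MGMT_NET=192.168.1.0/24
VPN_GW=192.168.2.1   # gateway from the Windows example above

# Add (or update) the route to the management network; needs root.
add_mgmt_route() {
    ip route replace "$MGMT_NET" via "$VPN_GW"
}

# Chassis addresses 192.168.1.61 - 192.168.1.64.
chassis_ips() {
    local i
    for i in 61 62 63 64; do
        echo "192.168.1.$i"
    done
}

# Ping each chassis once (2 s timeout) and report the result.
check_chassis() {
    local addr
    for addr in $(chassis_ips); do
        if ping -c 1 -W 2 "$addr" >/dev/null 2>&1; then
            echo "$addr up"
        else
            echo "$addr unreachable"
        fi
    done
}
```

Using ip route replace instead of ip route add means running add_mgmt_route twice does not fail with "File exists".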

You can also use the IBM BladeCenter Stand-alone Remote Console Utility (ibm_utl_sarc_1.1.0.8_anyos_noarch) to connect to the blade directly. The main IBM Support Portal link to this software is:

http://www-947.ibm.com/support/entry/portal/Downloads/Hardware/Systems/BladeCenter/BladeCenter_H_Chassis/8852/4YG (click download and fixes)

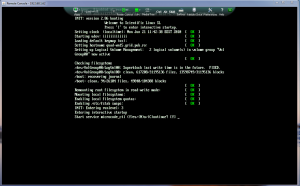

It should look like this: